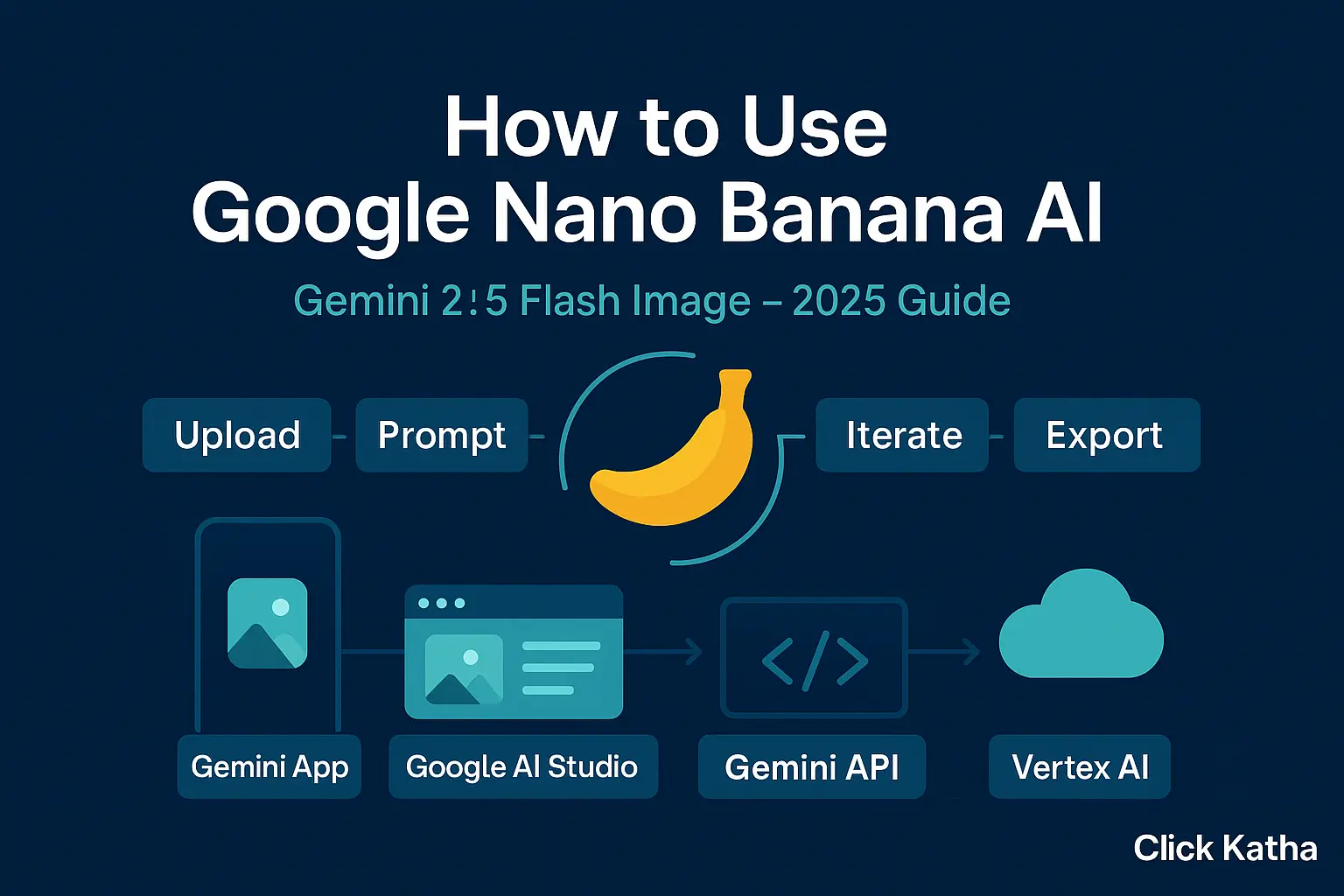

How to use Google Nano Banana AI: the complete 2025 guide

“Nano Banana” is the internal nickname for the image editing/generation model now released as Gemini 2.5 Flash Image.

Introduction

If you’ve been waiting for rock-solid, repeatable edits from an image tool, this is the moment. Since Google folded the “nano-banana” model into Gemini 2.5 Flash Image, the question everyone’s asking is how to use Google Nano Banana AI in practice. It keeps faces, pets, and products consistent across edits, blends multiple reference images, and follows plain-English instructions. In this guide, we’ll show four practical ways to use it: the Gemini app, Google AI Studio, the Gemini API, and Vertex AI for production. We’ll also cover pricing notes, safety labels, and prompt tips so you can ship with confidence.

What is “Nano Banana” in plain terms?

In plain terms, Nano Banana is Gemini 2.5 Flash Image, Google’s image generation and editing model. It’s built to maintain subject and style consistency while you iterate: change the background, outfit, lighting, or camera angle without warping the person or product. All outputs are marked with SynthID (an invisible watermark) for transparency. Knowing this helps when you plan responsible use in brand and ad workflows.

Option 1 — The Gemini app (fastest way to start)

If you want a no-setup route, start in the Gemini app (web or mobile):

- Open Gemini and select image editing.

- Upload a photo (or two or more if you plan to blend styles or objects).

- Give a clear instruction, e.g., “Keep the person the same, put them in a studio with soft light, add a teal backdrop. Keep the watch unchanged.”

- Iterate: ask for a new background, a different pose, or a subtle retouch. Gemini is designed to keep the core identity consistent across turns.

- Note the watermark: outputs carry an invisible SynthID watermark by default.

This is the simplest, “show me now” path for teams exploring how to use Google Nano Banana AI without touching code.

Option 2 — Google AI Studio (no-code + exportable code)

Google AI Studio is a browser playground that lets you try Gemini 2.5 Flash Image and then export working code to Node.js or Python. It’s a forgiving environment for building repeatable prompts before you commit to code.

Steps:

- Visit AI Studio, pick Gemini 2.5 Flash Image (Preview).

- Create a prompt and attach reference images (e.g., your model photo + a fabric texture).

- Use multi-image fusion to blend elements, and run variants until you’re happy.

- Click “Get code” to export (Node/Python) and move the same prompt into your app.
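Under the hood, the fusion step amounts to one request carrying a text part followed by several image parts. Below is a minimal standard-library sketch of that request body, assuming the Gemini REST `generateContent` shape (`contents` → `parts`, with images sent as `inline_data` holding a `mime_type` and a base64 `data` field). `image_part` and `build_fusion_request` are hypothetical helper names, not SDK functions; you would POST the resulting JSON to the model’s `:generateContent` endpoint with your API key in the `x-goog-api-key` header.

```python
import base64
import mimetypes
from pathlib import Path


def image_part(path: str) -> dict:
    """Encode one reference image as an inline_data part (base64 payload)."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognised image file: {path}")
    data = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return {"inline_data": {"mime_type": mime, "data": data}}


def build_fusion_request(prompt: str, image_paths: list[str]) -> dict:
    """Text instruction first, then every reference image, in a single user turn."""
    parts = [{"text": prompt}] + [image_part(p) for p in image_paths]
    return {"contents": [{"role": "user", "parts": parts}]}
```

For example, `build_fusion_request("Apply the texture from the second image to the jacket in the first image.", ["model.png", "fabric.jpg"])` (hypothetical file names) yields one text part and two image parts, in the order the prompt refers to them.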

AI Studio also highlights the safety watermarking note and model availability. If you’re documenting how to use Google Nano Banana AI for teammates, screenshots and exported snippets from AI Studio are perfect.

Option 3 — Gemini API (Node & Python)

When you’re ready to automate, the Gemini API gives you programmatic control. It’s cost-effective for batches: Google lists image output at about $0.039 per 1024×1024 image (based on token pricing). Below is a minimal pattern you can adapt as you scale up to production.

Node.js (ESM)

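```javascript
// Google Gen AI SDK for JavaScript: npm install @google/genai
import { GoogleGenAI } from "@google/genai";
import { readFileSync, writeFileSync } from "node:fs";

const ai = new GoogleGenAI({ apiKey: process.env.GOOGLE_API_KEY });

const prompt = `
Keep the subject identical.
Replace background with a soft studio teal.
Add a gentle rim light.
Keep the wristwatch unchanged.
`;

// Photo to edit ("input.png" is a placeholder path), sent inline as base64.
const base64Image = readFileSync("input.png").toString("base64");

const response = await ai.models.generateContent({
  model: "gemini-2.5-flash-image-preview", // Gemini 2.5 Flash Image (Preview)
  contents: [
    { text: prompt },
    { inlineData: { mimeType: "image/png", data: base64Image } },
    // Add more inlineData parts to blend extra reference images.
  ],
});

// Responses can mix text and image parts; image data arrives base64-encoded.
for (const part of response.candidates[0].content.parts) {
  if (part.inlineData) {
    writeFileSync("output.png", Buffer.from(part.inlineData.data, "base64"));
  } else if (part.text) {
    console.log(part.text);
  }
}
```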

Python

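```python
# Google Gen AI SDK for Python: pip install google-genai
import os
from google import genai
from google.genai import types

client = genai.Client(api_key=os.environ["GOOGLE_API_KEY"])
prompt = """
Keep the subject identical.
Replace background with a soft studio teal.
Add a gentle rim light.
Keep the wristwatch unchanged.
"""
with open("input.png", "rb") as f:  # placeholder path for the photo to edit
    source = types.Part.from_bytes(data=f.read(), mime_type="image/png")

resp = client.models.generate_content(
    model="gemini-2.5-flash-image-preview",  # Gemini 2.5 Flash Image (Preview)
    contents=[prompt, source],
)
# Image parts hold raw bytes in inline_data.data (the SDK has already decoded the base64).
for part in resp.candidates[0].content.parts:
    if part.inline_data is not None:
        with open("output.png", "wb") as out:
            out.write(part.inline_data.data)
```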
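Depending on the client, image data arrives in two forms: REST responses and the Node `@google/genai` SDK give you a base64 string, while the Python `google-genai` SDK hands you already-decoded bytes. A small helper that accepts either and names the file from the part’s MIME type keeps downstream code uniform. `save_inline_image` is a hypothetical helper, not part of any SDK, and the extension table below is an assumption covering only common image types.

```python
import base64
from pathlib import Path
from typing import Union

# Assumed mapping for image types you are likely to receive; extend as needed.
EXTENSIONS = {"image/png": ".png", "image/jpeg": ".jpg", "image/webp": ".webp"}


def save_inline_image(data: Union[bytes, str], mime_type: str, stem: str) -> Path:
    """Write one inline image part to disk and return its path.

    `data` may be raw bytes (Python google-genai SDK) or a base64 string
    (REST responses, Node SDK). `stem` is the file name without extension.
    """
    raw = base64.b64decode(data) if isinstance(data, str) else data
    ext = EXTENSIONS.get(mime_type)
    if ext is None:
        raise ValueError(f"unexpected MIME type: {mime_type}")
    path = Path(f"{stem}{ext}")
    path.write_bytes(raw)
    return path
```

In the Python loop, you would call `save_inline_image(part.inline_data.data, part.inline_data.mime_type, "output")` instead of hard-coding `output.png`.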

Use environment variables for keys, and store prompts in your repo so your team shares the same “recipe.” This is the cleanest way to standardise Nano Banana usage across multiple projects.
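One way to keep a shared recipe is a small JSON file checked into the repo, mapping recipe names to prompts, loaded alongside an API key read from the environment. The file layout (for example a hypothetical `prompts/recipes.json`) and the helper names are assumptions; the point is that prompts become versioned data rather than strings scattered through code.

```python
import json
import os
from pathlib import Path


def load_recipes(path: str) -> dict[str, str]:
    """Load named prompt recipes from a JSON object of {name: prompt}."""
    recipes = json.loads(Path(path).read_text(encoding="utf-8"))
    if not isinstance(recipes, dict) or not all(
        isinstance(k, str) and isinstance(v, str) for k, v in recipes.items()
    ):
        raise ValueError("recipes file must be a JSON object mapping strings to strings")
    return recipes


def api_key(var: str = "GOOGLE_API_KEY") -> str:
    """Read the API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(var)
    if not key:
        raise RuntimeError(f"set {var} in your environment (never commit keys)")
    return key
```

A call site then reads `prompt = load_recipes("prompts/recipes.json")["teal-studio"]`, so a prompt change shows up in code review like any other diff.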

Option 4 — Vertex AI (enterprise deployment)

If you need IAM, service accounts, logging, and regional hosting, Vertex AI is the answer. It exposes Gemini 2.5 Flash Image with the same core abilities (multi-image fusion and character consistency) plus the Cloud controls your CIO will love. It’s the natural route for regulated teams that already run their work in Google Cloud projects.

High-level steps:

- Create/choose a GCP project, enable Vertex AI.

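- Grant a service account the Vertex AI User role (roles/aiplatform.user). Prefer attached service accounts or Application Default Credentials; if you must use a key file, store it in your secret manager.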

- Choose a region (e.g., us-central1), then call the image model from your server or worker.

- Wire logging and quotas, set budget alerts.

- Ship behind review gates if you want human-in-the-loop approvals.
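With the Google Gen AI Python SDK (`google-genai`), moving from the Gemini API to Vertex AI is mostly client configuration: `genai.Client(vertexai=True, project=..., location=...)`, authenticated through Application Default Credentials instead of an API key. The sketch below only assembles and validates those arguments from environment variables. The variable names `GOOGLE_CLOUD_PROJECT` and `GOOGLE_CLOUD_LOCATION` follow the SDK’s conventions, and the `us-central1` default simply mirrors the example region above; confirm the image model is offered in the region you pick.

```python
import os
from typing import Optional


def vertex_client_kwargs(env: Optional[dict] = None) -> dict:
    """Build keyword arguments for google.genai.Client in Vertex AI mode.

    Credentials come from Application Default Credentials (e.g. the service
    account attached to your server or worker), so no API key is included.
    Usage (needs google-genai installed): genai.Client(**vertex_client_kwargs())
    """
    env = dict(os.environ) if env is None else env
    project = env.get("GOOGLE_CLOUD_PROJECT")
    if not project:
        raise RuntimeError("GOOGLE_CLOUD_PROJECT is not set")
    # Assumed default region, matching the example in the steps above.
    location = env.get("GOOGLE_CLOUD_LOCATION", "us-central1")
    return {"vertexai": True, "project": project, "location": location}
```

Keeping configuration in the environment means the same worker code runs against a dev or prod project without edits.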

Google’s Cloud blog and docs outline the model’s consistency and fusion features on Vertex AI, while pricing lives on the Vertex AI pricing page. For many teams, this is where Nano Banana moves from experiment to “how we run it every day.”

Prompt recipes that actually work

Consistency is the headline feature, so make prompts that protect identity and allow style changes:

- Lock identity: “Keep the person/pet/product identical. Preserve facial features, proportions, and branded details.”

- Control the scene: “Replace background with a clean studio teal. Soft key light. Subtle rim light.”

- Blend references: “Apply the texture from Image B to the jacket in Image A.”

- Iterate in small steps: Ask for one change at a time (pose, lighting, backdrop).
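The habits above compose naturally: identity lock first, fixed scene constraints next, and exactly one change per request. Here is a minimal sketch of that ordering as a prompt builder; `build_edit_prompt` is a hypothetical helper, and the ordering is a convention taken from the recipes above rather than something the model requires. Taking a single `change` string per call is how the sketch nudges you toward one edit at a time.

```python
from typing import Optional

# Identity-lock wording taken from the recipe list above.
IDENTITY_LOCK = (
    "Keep the {subject} identical. "
    "Preserve facial features, proportions, and branded details."
)


def build_edit_prompt(subject: str, change: str, scene: Optional[list[str]] = None) -> str:
    """Identity lock first, then fixed scene constraints, then a single change."""
    if not change.strip():
        raise ValueError("describe exactly one change")
    lines = [IDENTITY_LOCK.format(subject=subject)]
    lines.extend(scene or [])
    lines.append(f"Change only this: {change.strip()}")
    return "\n".join(lines)


# One edit per call, e.g. a lighting pass with the backdrop held fixed:
print(build_edit_prompt("person", "add a subtle rim light", ["Clean studio teal background."]))
```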

These habits make results predictable for teams and clients. Google reiterates the model’s consistency and blending capabilities across its launch posts and docs.

Pricing, safety labels, and usage notes

- Pricing (API): outputs are metered by tokens; a 1024×1024 image is roughly $0.039. Budget for retries and variants during creative review.

- Watermarking: all generated/edited images include SynthID, an invisible marker.

- Policies: mind content rules and rights for any source images you upload.
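The ~$0.039 figure is easiest to sanity-check as arithmetic. At launch, Google described it as roughly 1,290 output tokens per 1024×1024 image billed at $30 per million output tokens; treat both constants as assumptions to verify against the current pricing page. The sketch folds in an attempts-per-image multiplier so retries and variants are budgeted up front, and it deliberately ignores input-token costs for prompts and reference images.

```python
# Assumed launch pricing for Gemini 2.5 Flash Image output; check the current page.
TOKENS_PER_IMAGE = 1290          # output tokens per 1024x1024 image
USD_PER_MILLION_OUTPUT = 30.00   # USD per 1M output tokens


def estimate_output_cost(final_images: int, attempts_per_image: float = 3.0) -> float:
    """Rough USD cost of output images only, including retries and variants.

    attempts_per_image counts every generation expected per kept image
    (the keeper plus rejected variants). Input tokens are not included.
    """
    if final_images < 0 or attempts_per_image < 1:
        raise ValueError("need final_images >= 0 and attempts_per_image >= 1")
    per_image = TOKENS_PER_IMAGE * USD_PER_MILLION_OUTPUT / 1_000_000  # about $0.0387
    return round(final_images * attempts_per_image * per_image, 2)


print(estimate_output_cost(200))  # 200 kept images, 3 attempts each; prints 23.22
```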

If your procurement team asks “Is our plan for how to use Google Nano Banana AI compliant?”, point them to Google’s safety pages and watermarking notes.

When to pick each path

- Gemini app: fast, hands-on edits; creators and marketers. Best for learning how to use Google Nano Banana AI in minutes.

- Google AI Studio: no-code prototyping and shared prompt libraries; export code. Ideal for internal demos of how to use Google Nano Banana AI.

- Gemini API: integrate into your web app, CMS, or pipeline.

- Vertex AI: enterprise guardrails, IAM, observability, SLAs.

Troubleshooting (quick fixes)

- It changed the person’s look. Re-state identity constraints up top: “Keep face, hairstyle, and accessories identical.”

- Colors drift between versions. Add exact color words or hex values for backgrounds and props.

- Edges look soft. Ask for “crisper edges” or “increase micro-contrast on clothing texture.”

- Layout moved. Specify “do not move subject position; keep centered.”
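The fixes above share a pattern: turn the symptom into an explicit constraint and put it at the top of the next prompt. A minimal sketch, assuming you tag each rejected result with one of a few hypothetical symptom keys; the constraint wording is taken from the list above, and the teal hex default is an illustrative assumption.

```python
# Symptom key -> corrective constraint (wording from the troubleshooting list).
FIXES = {
    "identity_drift": "Keep face, hairstyle, and accessories identical.",
    "color_drift": "Use the exact background color {hex_color}.",
    "soft_edges": "Crisper edges; increase micro-contrast on clothing texture.",
    "layout_moved": "Do not move subject position; keep centered.",
}


def revise_prompt(prompt: str, symptoms: list[str], hex_color: str = "#008080") -> str:
    """Prepend one constraint per observed symptom, in a stable order."""
    unknown = set(symptoms) - set(FIXES)
    if unknown:
        raise KeyError(f"no fix defined for: {sorted(unknown)}")
    # Iterate over FIXES (not symptoms) so the order never depends on tagging order.
    fixes = [FIXES[s].format(hex_color=hex_color) for s in FIXES if s in symptoms]
    return "\n".join(fixes + [prompt])
```

Because constraints are prepended, the original instruction stays last and the corrective lines are read first, matching the advice to restate identity constraints up top.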

Iteration is part of the workflow: short, targeted edits beat one mega-prompt.

Practical use cases to ship now

- E-commerce: seasonal catalog refresh without reshoots; maintain the same product while swapping scenes.

- Brand kits: mascots or ambassadors that look the same across dozens of placements.

- Real estate & staging: change décor and light without losing the room’s layout.

- Learning content: step-by-step visuals that remain consistent slide to slide.
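For the e-commerce case, the job list is simply products crossed with scenes, with the identity lock repeated in every prompt so each request stands on its own. A minimal sketch; the SKU names, scenes, and the `EditJob` structure are hypothetical, and each job would become one API call.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class EditJob:
    sku: str
    scene: str
    prompt: str


def plan_catalog_refresh(skus: list[str], scenes: list[str]) -> list[EditJob]:
    """One job per (product, scene) pair; the product itself must not change."""
    return [
        EditJob(
            sku=sku,
            scene=scene,
            prompt=(
                "Keep the product identical. Preserve proportions and branded details. "
                f"Replace the background with: {scene}."
            ),
        )
        for sku, scene in product(skus, scenes)
    ]


jobs = plan_catalog_refresh(["watch-001", "bag-204"], ["snowy cabin window", "spring garden"])
print(len(jobs))  # prints 4
```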

Google’s materials highlight multi-image fusion and identity stability—the two pillars that make these use cases dependable. Both matter when you outline how to use Google Nano Banana AI for stakeholders.

FAQ

Is Nano Banana only in the API?

No. It’s in the Gemini app for everyday use, Google AI Studio for prototyping, the Gemini API for developers, and Vertex AI for Cloud deployments, so each team can start on the surface that fits its work.

Can I blend multiple photos?

Yes. The model supports multi-image fusion and style mixing, so you can combine objects, textures, and backgrounds while keeping identity intact. Choosing good reference images is a core skill.

Are images labelled as AI-generated?

Yes. Images carry an invisible SynthID watermark by default.

How much does it cost?

Via the Gemini API, a 1024×1024 output is about $0.039 (per Google AI for Developers). Vertex AI pricing is listed separately on Google Cloud’s pricing page.

Wrap-up

You now know how to use Google Nano Banana AI in the Gemini app for quick wins, in Google AI Studio for painless prototyping, through the Gemini API for automation, and on Vertex AI for enterprise scale. Embed these paths in your creative stack, and Nano Banana becomes less “new feature” and more “new standard.”